Let P and Q be the distributions shown in the table and figure. Because work is obtained from ordered molecular motion, the amount of entropy is also a measure of the molecular disorder, or randomness, of a system. The resulting method differs significantly from previous policy gradient methods.

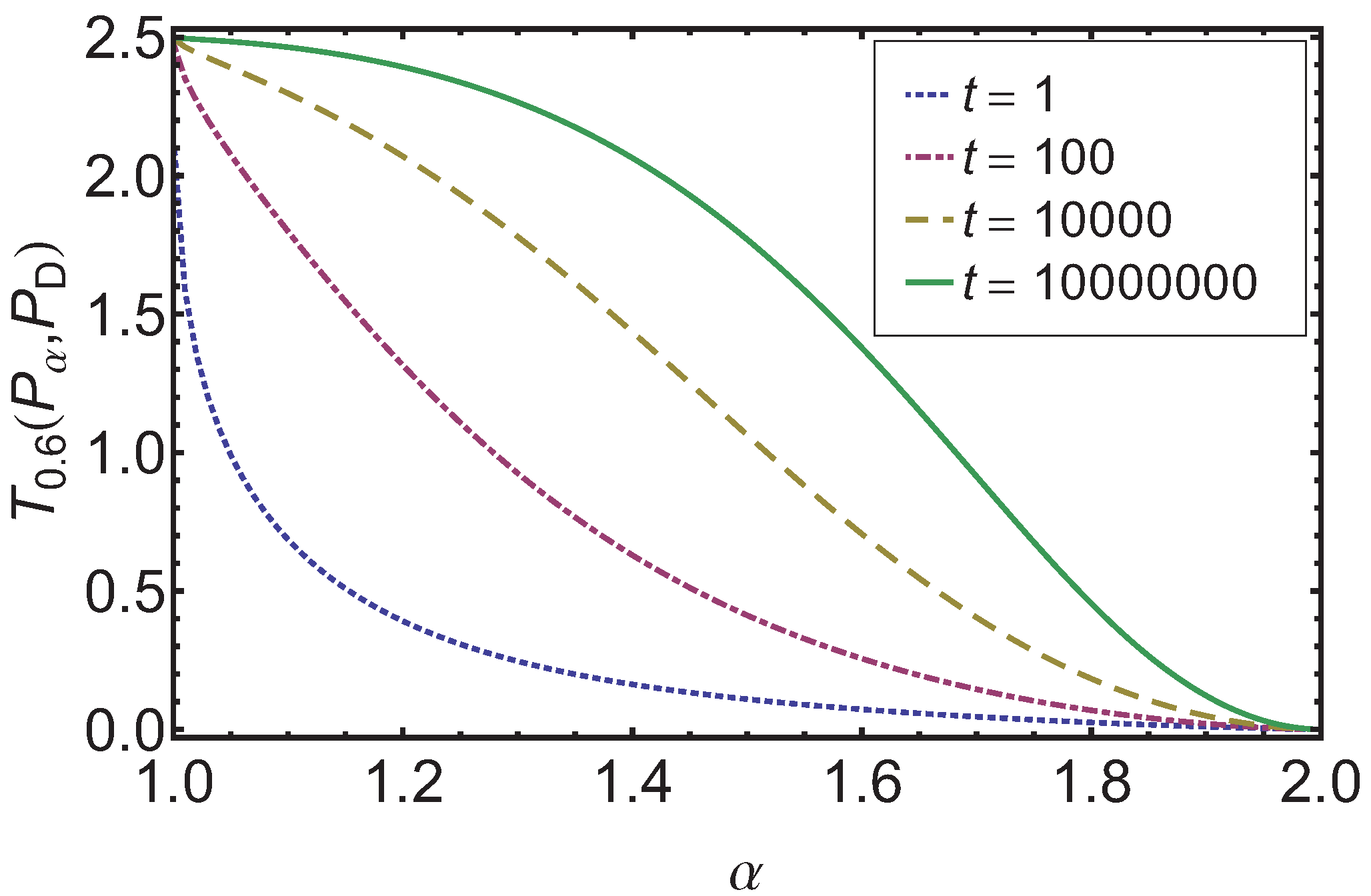

Kullback gives the following example (Table 2.1, Example 2.1). In mathematical statistics, the Kullback–Leibler divergence (also called relative entropy and I-divergence), denoted \( D_{\mathrm{KL}}(P \parallel Q) \), measures how a probability distribution P differs from a reference distribution Q; for discrete distributions, \( D_{\mathrm{KL}}(P \parallel Q) = \sum_x P(x) \log \frac{P(x)}{Q(x)} \). For \( f, g \in A_x \), the Kullback–Leibler (KL) divergence is defined analogously. Relative entropy is also known as Kullback–Leibler divergence [27]. Information gain and relative entropy, used in the training of decision trees, are defined as the "distance" between two probability mass functions p(x) and q(x). Unlike relative entropy, mutual information is symmetric: it is the reduction in the uncertainty of one random variable due to knowledge of the other. This path of reasoning suggests the Relative Entropy Policy Search (REPS) method.

In thermodynamics, entropy is the measure of a system's thermal energy per unit temperature that is unavailable for doing useful work. The absolute entropy of a substance tends to increase with increasing molecular complexity, because the number of available microstates increases with molecular complexity; for example, compare the S° values for CH₃OH(l) and CH₃CH₂OH(l). Among crystalline materials, those with the lowest entropies tend to be rigid crystals composed of small atoms linked by strong, highly directional bonds, such as diamond. In contrast, graphite, the softer, less rigid allotrope of carbon, has a higher S° due to more disorder in the crystal. More generally, soft crystalline substances and those with larger atoms tend to have higher entropies because of increased molecular motion and disorder. Finally, substances with strong hydrogen bonds have lower values of S°, which reflects a more ordered structure.

Entropy maximization (MaxEnt) is a general approach to inferring a probability distribution from constraints that do not uniquely characterize that distribution. A detailed discussion of information-based methods, specifically Bayesian inference, maximum entropy and minimum relative entropy (MRE), is given in Ulrych & Sacchi (2006).
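To make the MaxEnt idea concrete, here is a minimal Python sketch under assumed conditions: a six-sided die whose only known property is its average roll. The target mean of 4.5 is an illustrative value, and `maxent_with_mean` is a hypothetical helper, not a library function. With a single mean constraint, the entropy-maximizing distribution has the exponential form \( p_k \propto e^{\lambda v_k} \), so the sketch only needs to find the one multiplier \( \lambda \), which it does by bisection.

```python
import math
from typing import List, Sequence


def maxent_with_mean(values: Sequence[float], target_mean: float,
                     lo: float = -50.0, hi: float = 50.0,
                     iters: int = 200) -> List[float]:
    """Maximum-entropy pmf over `values` with sum(p) = 1 and E[v] = target_mean.

    Hypothetical helper for illustration. The constrained maximizer has the
    form p_k proportional to exp(lam * v_k); the mean is strictly increasing
    in lam, so lam is found by bisection on the bracket [lo, hi] (assumed
    wide enough for the chosen target).
    """
    if not (min(values) < target_mean < max(values)):
        raise ValueError("target_mean must lie strictly between min and max of values")

    def pmf(lam: float) -> List[float]:
        # Subtract the largest exponent before exponentiating, for stability.
        exps = [lam * v for v in values]
        m = max(exps)
        weights = [math.exp(e - m) for e in exps]
        z = sum(weights)
        return [w / z for w in weights]

    def mean(lam: float) -> float:
        return sum(p * v for p, v in zip(pmf(lam), values))

    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if mean(mid) < target_mean:
            lo = mid  # mean too small: move lam up
        else:
            hi = mid  # mean too large (or exact): move lam down
    return pmf(0.5 * (lo + hi))


# Illustrative use: die faces 1..6 constrained to an average roll of 4.5.
faces = [1, 2, 3, 4, 5, 6]
p = maxent_with_mean(faces, 4.5)
print([round(x, 4) for x in p])
```

Bisection is used only because the mean is monotone in \( \lambda \); Newton's method or a general convex optimizer would work equally well. With a target mean of 3.5 the same routine returns the uniform distribution, as expected when the constraint adds no information.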
The default option for computing the KL divergence between discrete probability vectors would be … It has diverse applications, both theoretical, such as characterizing the relative … The KL divergence, also known as relative entropy, information divergence, or information for discrimination, is a non-symmetric measure of the difference between two probability distributions p(x) and q(x).
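As a minimal sketch of the discrete KL divergence, \( \sum_x p(x) \log \frac{p(x)}{q(x)} \) in nats, the following Python function takes two probability vectors on the same support. The name `kl_divergence` and the vectors `p` and `q` are hypothetical illustrations, not the values from Kullback's table. Evaluating it in both directions makes the non-symmetry visible.

```python
import math
from typing import Sequence


def kl_divergence(p: Sequence[float], q: Sequence[float]) -> float:
    """Return D_KL(p || q) in nats for two discrete distributions.

    Uses the convention 0 * log(0 / q) = 0. If some p_i > 0 while q_i == 0,
    the divergence is infinite.
    """
    if len(p) != len(q):
        raise ValueError("p and q must have the same length")
    total = 0.0
    for p_i, q_i in zip(p, q):
        if p_i == 0.0:
            continue  # zero-probability outcomes under p contribute nothing
        if q_i == 0.0:
            return math.inf  # p puts mass where q has none
        total += p_i * math.log(p_i / q_i)
    return total


# Hypothetical example distributions over three outcomes.
p = [0.5, 0.3, 0.2]
q = [1 / 3, 1 / 3, 1 / 3]

print(f"D_KL(p || q) = {kl_divergence(p, q):.4f} nats")
print(f"D_KL(q || p) = {kl_divergence(q, p):.4f} nats")
```

The two printed values differ, which is why the KL divergence is not a distance metric even though it is often described informally as a "distance". Replacing `math.log` with `math.log2` would report the result in bits instead of nats.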

A comparison of standard entropies also reveals that substances with similar molecular structures tend to have similar S° values. Relative entropy is a nonnegative function of two distributions or measures, and it equals zero only when the two coincide.
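Nonnegativity of relative entropy is what makes mutual information nonnegative: \( I(X;Y) = D_{\mathrm{KL}}\big(p(x,y) \parallel p(x)\,p(y)\big) \), which is zero exactly when X and Y are independent and also equals \( H(X) - H(X \mid Y) \). The Python sketch below computes it from a joint probability table; the helper names `mutual_information` and `transpose` and the 2×2 table are hypothetical illustrations. Swapping the roles of X and Y (transposing the table) gives the same value, reflecting the symmetry of mutual information.

```python
import math
from typing import List, Sequence


def mutual_information(joint: Sequence[Sequence[float]]) -> float:
    """I(X;Y) in nats from a joint pmf given as a table joint[x][y].

    Computed as the relative entropy between the joint distribution and the
    product of its marginals.
    """
    p_x = [sum(row) for row in joint]          # marginal of X (row sums)
    p_y = [sum(col) for col in zip(*joint)]    # marginal of Y (column sums)
    total = 0.0
    for i, row in enumerate(joint):
        for j, p_xy in enumerate(row):
            if p_xy > 0.0:  # p_xy > 0 implies both marginals are > 0
                total += p_xy * math.log(p_xy / (p_x[i] * p_y[j]))
    return total


def transpose(table: Sequence[Sequence[float]]) -> List[List[float]]:
    """Swap rows and columns, i.e. exchange the roles of X and Y."""
    return [list(col) for col in zip(*table)]


# Hypothetical joint distribution of two binary variables X (rows), Y (columns).
joint_xy = [[0.4, 0.1],
            [0.2, 0.3]]

i_xy = mutual_information(joint_xy)
i_yx = mutual_information(transpose(joint_xy))
print(f"I(X;Y) = {i_xy:.4f} nats, I(Y;X) = {i_yx:.4f} nats")
```

For an independent table such as `[[0.25, 0.25], [0.25, 0.25]]`, every ratio inside the logarithm is 1 and the result is zero.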
